AliExpress Wiki

Data Engineering Strategy: The Ultimate Guide to Building Scalable, Efficient Data Pipelines

A data engineering strategy ensures scalable, efficient data pipelines for modern businesses. It enables seamless data flow, supports real-time processing, and aligns technical systems with business goals, which is critical for innovation and operational excellence.

Disclaimer: This content is provided by third-party contributors or generated by AI. It does not necessarily reflect the views of AliExpress or the AliExpress blog team. Please refer to our full disclaimer.

People also searched

Related Searches

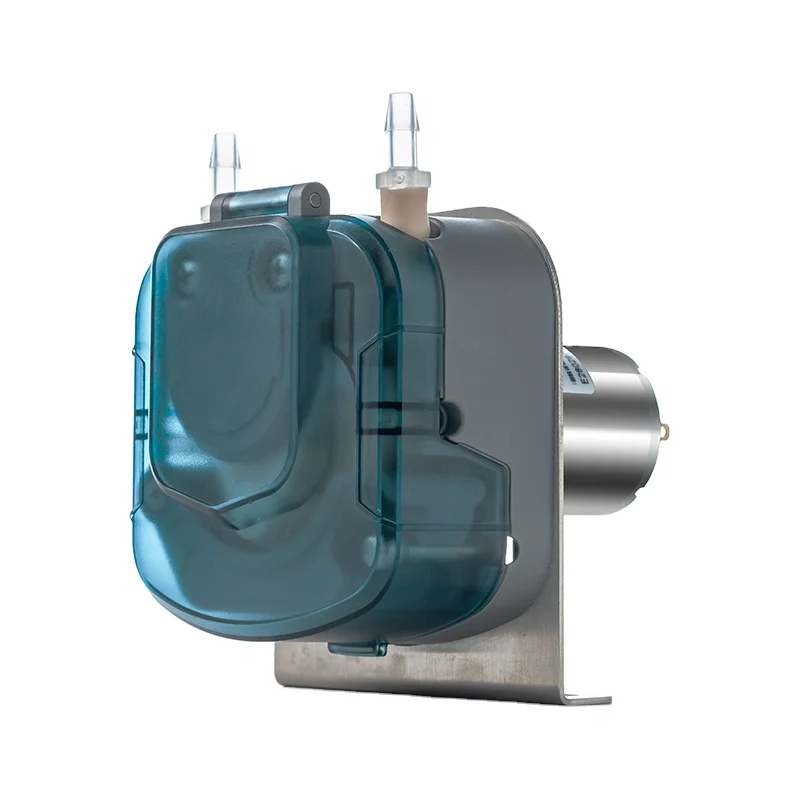

<h2> What Is a Data Engineering Strategy and Why Does It Matter for Modern Businesses? </h2> <a href="https://www.aliexpress.com/item/1005002039190202.html"> <img src="https://ae-pic-a1.aliexpress-media.com/kf/Sdfbc1d9629fa45019eac866779525840F.jpg" alt="Netac External SSD 2TB 1TB 250GB 500GB HDD Portable SSD USB 3.2 Hard Disk Type C Solid State Drive for Laptop Notebook Computer"> </a> In today’s data-driven world, a well-structured data engineering strategy is no longer a luxury; it’s a necessity. Whether you're a startup scaling rapidly or an enterprise managing petabytes of information, your ability to collect, process, store, and deliver data efficiently shapes your competitive edge. But what exactly is a data engineering strategy? At its core, it’s a comprehensive plan that outlines how an organization will design, build, and manage its data infrastructure to support analytics, machine learning, real-time processing, and business intelligence. A data engineering strategy goes beyond choosing tools or setting up databases. It defines the architecture, governance, scalability, reliability, and security of your data systems, and it ensures that data moves smoothly from source to destination, whether the source is an IoT sensor, a customer transaction, or a third-party API. For example, in a laboratory, where precision and consistency are critical, a data engineering strategy might involve connecting peristaltic pumps, such as the BP1000 high-flow 12V/24V laboratory chemical dosing pump, to real-time monitoring systems that track fluid delivery accuracy. This integration turns raw mechanical operations into data points that support predictive maintenance, quality control, and process optimization. The importance of a data engineering strategy becomes even clearer when you consider the consequences of its absence.
Without a clear roadmap, organizations often end up with data silos, inconsistent formats, duplicated effort, and systems that break under load. These inefficiencies lead to delayed insights, poor decisions, and wasted resources. A solid strategy helps prevent these pitfalls by establishing standardized data pipelines, defining clear data ownership, and automating and monitoring those pipelines. Moreover, a data engineering strategy aligns technical execution with business goals. For instance, if your company aims to launch an AI-powered recommendation engine, your data strategy must ensure that customer behavior data is collected in real time, cleaned consistently, and stored in a format suitable for model training. This requires not only technical expertise but also collaboration across teams: data engineers, data scientists, product managers, and IT operations. In the context of AliExpress, where sellers and manufacturers rely on data to optimize inventory, shipping, and customer engagement, a data engineering strategy can be the difference between a thriving store and one that struggles to scale. Likewise, manufacturers that add data logging to equipment such as peristaltic pumps can monitor performance, anticipate failures, and reduce downtime, which directly improves operational efficiency and customer satisfaction. Ultimately, a data engineering strategy is not a one-time project but an evolving framework. It must adapt to new technologies, changing business needs, and growing data volumes. Whether you're managing a small lab experiment or a global e-commerce platform, investing in a robust data engineering strategy ensures that your data becomes a strategic asset, not a liability. <h2> How to Choose the Right Data Infrastructure for Your Data Engineering Strategy?
</h2> <a href="https://www.aliexpress.com/item/1005008975601913.html"> <img src="https://ae-pic-a1.aliexpress-media.com/kf/Sdae57c8917764c9eae6a58178e653d0aV.jpg" alt="BP1000 High Flow Rate Juice Syrup Beverage 12v 24v Small Laboratory Chemical Dosing 1000ml 12v Peristaltic Pump"> </a> Selecting the right data infrastructure is one of the most critical decisions in building a data engineering strategy. The infrastructure you choose determines how fast your data pipelines run, how easily you can scale, and how resilient your systems are to failures. With so many options (cloud platforms, on-premises servers, hybrid models, and specialized hardware), it’s easy to feel overwhelmed. So how do you make the right choice? Start by understanding your data volume, velocity, and variety. If you're running a high-throughput laboratory operation, such as precise chemical dosing with the BP1000 peristaltic pump, you’re likely dealing with time-series data generated at regular intervals. This requires a system capable of real-time ingestion, low-latency processing, and reliable storage. In such cases, streaming platforms like Apache Kafka or Amazon Kinesis may be more suitable than traditional batch processing systems. Next, consider your scalability needs. A data engineering strategy must support growth, for example from 100 to 10,000 data points per minute. Cloud-based infrastructure offers elastic scalability, allowing you to allocate resources dynamically based on demand. Platforms like Google Cloud, AWS, and Azure provide managed services for data storage and warehousing (e.g., BigQuery, Amazon S3), data processing (e.g., Dataflow, Amazon EMR), and orchestration (e.g., Apache Airflow, offered as a managed service through Google Cloud Composer and Amazon MWAA). These services reduce the operational burden on your team, letting you focus on building value rather than managing servers. Another key factor is integration capability.
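As a minimal Python sketch of what integration capability can look like in practice, the function below decodes one JSON message from a pump's telemetry feed into a typed, validated record before it reaches storage. It assumes a hypothetical IoT module that publishes one message per reading; the field names (`pump_id`, `flow_ml_min`, `ts`, `operator_id`, `batch`) are illustrative and are not a documented BP1000 format. The transport (a Kafka consumer, a message queue, or an HTTP endpoint) is left out; whichever one you use would call `parse_reading` on each message.

```python
import json
import math
from dataclasses import dataclass
from datetime import datetime

# Hypothetical message fields; adapt them to whatever your telemetry module emits.
REQUIRED_FIELDS = ("pump_id", "flow_ml_min", "ts", "operator_id", "batch")


@dataclass(frozen=True)
class PumpReading:
    """One validated reading from a (hypothetical) pump telemetry feed."""
    pump_id: str
    flow_ml_min: float
    timestamp: datetime
    operator_id: str
    batch: str


def parse_reading(raw: str) -> PumpReading:
    """Decode and validate one JSON message.

    Raises ValueError for malformed JSON, missing fields, or bad values
    (and TypeError if a field has an unexpected type, e.g. null).
    """
    msg = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(msg, dict):
        raise ValueError("expected a JSON object")
    missing = [field for field in REQUIRED_FIELDS if field not in msg]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    flow = float(msg["flow_ml_min"])  # ValueError for non-numeric strings
    if not math.isfinite(flow) or flow < 0:
        raise ValueError(f"invalid flow rate: {flow}")
    return PumpReading(
        pump_id=str(msg["pump_id"]),
        flow_ml_min=flow,
        # Expects ISO 8601 text such as "2024-05-01T12:00:00+00:00".
        timestamp=datetime.fromisoformat(msg["ts"]),
        operator_id=str(msg["operator_id"]),
        batch=str(msg["batch"]),
    )


example = (
    '{"pump_id": "pump-01", "flow_ml_min": 850.0, '
    '"ts": "2024-05-01T12:00:00+00:00", "operator_id": "op-7", "batch": "B-042"}'
)
print(parse_reading(example).flow_ml_min)  # 850.0
```

Rejecting malformed messages at the point of ingestion keeps downstream tables consistent, and carrying the operator and batch IDs on every record supports the traceability that regulated labs need.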
Your data infrastructure should connect cleanly with existing tools, whether that’s a laboratory information management system (LIMS), an e-commerce platform like AliExpress, or a business intelligence dashboard. For example, a BP1000 peristaltic pump could be fitted with external sensors and an IoT module that feed readings into a central system. Your infrastructure must support such integrations through APIs, message queues, or database connectors so that data flows smoothly. Cost is also a major consideration. While cloud platforms offer pay-as-you-go pricing, they can become expensive at scale if not managed carefully. On-premises solutions may offer lower long-term costs but require significant upfront investment and ongoing maintenance. A hybrid approach, using the cloud for peak workloads and on-premises systems for sensitive or regulated data, can offer a balanced solution. Finally, don’t overlook reliability and security. Your data infrastructure must include backup mechanisms, disaster recovery plans, and strong access controls. In a laboratory, where data integrity is crucial, this means ensuring that every chemical dose logged for the peristaltic pump is securely stored and auditable. Encryption, role-based access control, and audit trails are non-negotiable. In summary, choosing the right data infrastructure isn’t about picking the latest technology; it’s about aligning your technical stack with your business objectives, data characteristics, and operational constraints. Whether you're automating lab processes or optimizing e-commerce workflows on AliExpress, the right infrastructure lets your data engineering strategy deliver real, measurable value. <h2> How Can a Data Engineering Strategy Improve Operational Efficiency in Manufacturing and Lab Environments?
</h2> <a href="https://www.aliexpress.com/item/1005007017790469.html"> <img src="https://ae-pic-a1.aliexpress-media.com/kf/S3e2dd618855c47afb88c5f7ea6bb4e7eS.jpg" alt="Cricket Control Rolling Ball System Resistive Screen Plate PID Balance Ball For Arduino Robot DIY Kit for STM32 School Project"> </a> Operational efficiency is the lifeblood of both manufacturing and laboratory environments, where precision, consistency, and speed are paramount. A well-executed data engineering strategy can transform these operations by turning raw machine data into actionable intelligence. Consider the BP1000 high-flow peristaltic pump used in chemical dosing: once it is instrumented with sensors, it does more than deliver fluids; it produces a stream of operational data that, when properly managed, can drive significant improvements. In a lab, every drop of reagent matters. By connecting an instrumented BP1000 pump to a data engineering pipeline, you can capture real-time metrics such as flow rate, pressure, motor speed, and cycle count. This data can be processed and analyzed to detect anomalies, such as a sudden drop in flow that might indicate a clogged tube or a failing motor. Early detection helps prevent costly errors, supports reproducibility, and helps maintain compliance with regulatory standards. Beyond diagnostics, data engineering enables predictive maintenance. Instead of replacing pumps on a fixed schedule, you can use historical data to estimate when a component is likely to fail. Machine learning models trained on pump performance data can flag potential issues before they cause a breakdown, reducing unplanned downtime and extending equipment lifespan. This is especially valuable in high-throughput labs, where even a few hours of downtime can delay critical experiments. In manufacturing, the benefits are equally significant. Data from production lines, such as temperature, pressure, and throughput, can be ingested into a centralized data warehouse.
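The flow-drop check described above can be sketched in Python with a rolling baseline: each new reading is compared with the mean of the previous readings and flagged if it falls too far below it. This is a simple statistical rule, not the machine learning approach mentioned above, and the window of 20 readings and the 30% threshold are illustrative assumptions rather than tuned values for any specific pump.

```python
from collections import deque
from statistics import mean
from typing import Iterable, Iterator, Tuple


def detect_flow_drops(
    readings: Iterable[float],
    window: int = 20,        # illustrative: readings in the rolling baseline
    max_drop: float = 0.30,  # illustrative: flag drops of more than 30%
) -> Iterator[Tuple[int, float, float]]:
    """Yield (index, reading, baseline) for readings well below the rolling mean."""
    recent = deque(maxlen=window)  # the oldest reading falls out automatically
    for i, value in enumerate(readings):
        # Only judge a reading once a full window of history is available.
        if len(recent) == window:
            baseline = mean(recent)
            if value < baseline * (1 - max_drop):
                yield i, value, baseline
        recent.append(value)


# Hypothetical flow readings in ml/min: steady, then one sudden drop.
readings = [100.0] * 20 + [60.0, 100.0]
print(list(detect_flow_drops(readings)))  # [(20, 60.0, 100.0)]
```

Because flagged readings are still added to the window, a persistent drop (for example, a fully clogged tube) eventually becomes the new baseline and stops triggering; a real system might pair this rule with an absolute minimum-flow alarm.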
From the warehouse, engineers can identify bottlenecks, optimize workflows, and improve yield. For example, if a particular batch of syrup shows inconsistent viscosity, data from the peristaltic pump, combined with other line sensor data, can help trace the root cause to a specific flow rate deviation or temperature fluctuation. A data engineering strategy also supports real-time monitoring and control. By connecting the BP1000 pump to a dashboard via IoT protocols, operators can monitor performance from anywhere. Alerts can be triggered when parameters go out of range, enabling immediate corrective action. This level of visibility reduces human error and keeps product quality consistent. For businesses on platforms like AliExpress, operational efficiency translates directly into customer satisfaction. Faster production cycles mean quicker order fulfillment, and higher product consistency leads to fewer returns and better reviews. By using data engineering to optimize equipment like peristaltic pumps, manufacturers can scale their operations without sacrificing quality. In essence, a data engineering strategy turns passive equipment into intelligent assets and turns data from a byproduct into a strategic resource. Whether you're dosing chemicals in a lab or producing beverages at scale, the ability to collect, analyze, and act on data is what separates high-performing operations from the rest. <h2> What Are the Key Components of a Scalable Data Engineering Strategy? </h2> <a href="https://www.aliexpress.com/item/1005008185604477.html"> <img src="https://ae-pic-a1.aliexpress-media.com/kf/A5a1c99c9a4854417acf6937e224a7daek.jpg" alt="BOOK DISSELLING TECHNICAL ANALYSIS ADVANCED STRATEGIES"> </a> Building a scalable data engineering strategy requires more than good tools; it demands a well-architected system that can grow with your business. Scalability isn’t just about handling more data; it’s about maintaining performance, reliability, and cost-efficiency as your operations expand.
So, what are the essential components that make a data engineering strategy truly scalable? First, modular architecture is crucial. Your data pipeline should be broken into discrete, reusable components (ingestion, transformation, storage, and consumption), each designed to function independently. This allows you to scale specific parts without overhauling the entire system. For instance, if your lab starts generating more sensor data from peristaltic pumps, you can scale the ingestion layer (e.g., Kafka) without touching the analytics engine. Second, automated data pipelines are a cornerstone of scalability. Manual processes are error-prone and time-consuming. With orchestration tools like Apache Airflow or AWS Step Functions, you can automate workflows from data extraction through validation and loading. This ensures consistency and frees engineers to focus on innovation rather than repetitive tasks. Third, data quality and governance must be built into the strategy from the start. As data volume grows, so does the risk of inconsistencies, duplicates, and missing values. Data validation rules, lineage tracking, and metadata management keep your data trustworthy at scale. For example, every chemical dose logged for the BP1000 pump should be tagged with a timestamp, operator ID, and batch number, enabling full traceability. Fourth, cloud-native design enhances scalability. Cloud platforms offer elastic compute and storage, allowing you to scale up during peak demand and scale down when idle. Services like AWS Lambda for serverless processing or Google BigQuery for petabyte-scale analytics reduce the need for capacity planning and can lower infrastructure costs. Finally, monitoring and observability are essential. A scalable system must be visible and controllable, so implement logging, metrics, and alerting across all components.
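As a minimal Python sketch of that kind of alerting, the function below flags any data source whose most recent reading is older than an allowed gap, which is one way to notice that a pump's data stream has slowed or stopped. The source names and the 60-second threshold are hypothetical; in practice a monitoring tool such as Prometheus would evaluate a similar rule against stored metrics.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Optional


def stale_sources(
    last_seen: Dict[str, datetime],
    now: Optional[datetime] = None,
    max_gap: timedelta = timedelta(seconds=60),  # illustrative threshold
) -> List[str]:
    """Return, sorted, the IDs of sources whose latest reading is older than max_gap."""
    if now is None:
        now = datetime.now(timezone.utc)  # timestamps must then be timezone-aware
    return sorted(source for source, ts in last_seen.items() if now - ts > max_gap)


# Hypothetical last-seen timestamps for two pump telemetry feeds.
check_time = datetime(2024, 5, 1, 12, 0, tzinfo=timezone.utc)
last_seen = {
    "pump-01": check_time - timedelta(seconds=5),
    "pump-02": check_time - timedelta(minutes=10),
}
print(stale_sources(last_seen, now=check_time))  # ['pump-02']
```

Passing an explicit `now` keeps the check deterministic and easy to test; in production it would run on a schedule and route its output to an alerting channel.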
If a peristaltic pump’s data stream slows down, your system should detect it promptly and notify the right team. Tools like Prometheus, Grafana, or Datadog provide real-time insight into system health. Together, these components form a resilient, adaptable data engineering strategy. Whether you're managing a single lab device or a global data network, a scalable design lets your systems grow with your ambitions. <h2> How Does a Data Engineering Strategy Differ from Data Science or Data Analytics? </h2> While data science, data analytics, and data engineering are closely related, they serve distinct roles in the data lifecycle. A data engineering strategy focuses on the foundation: building and maintaining the systems that make data usable. In contrast, data science and analytics are about extracting insights and value from that data. Data engineering is the backbone. It’s responsible for designing data pipelines, ensuring data quality, and enabling efficient storage and retrieval. For example, an instrumented BP1000 peristaltic pump generates continuous data streams. A data engineer ensures this data is captured reliably, stored in a structured format, and made accessible to downstream systems. Data science, on the other hand, uses that data to build models that predict equipment failure, optimize dosing schedules, or forecast demand. A data scientist might train a machine learning model on historical pump performance to predict maintenance needs. Data analytics focuses on reporting and visualization. Analysts use processed data to build dashboards, track KPIs, and surface business insights. For instance, a lab manager might use analytics to track average flow rate over time and identify trends. A strong data engineering strategy enables both data science and analytics by providing clean, timely, and well-documented data. Without it, even the most advanced models and reports are built on shaky ground.
In short, data engineering is the enabler; data science and analytics are the users.
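To make this division of labor concrete, here is a minimal Python sketch with two separate functions: an engineering step that turns raw (timestamp, flow) text rows into typed records and drops rows it cannot parse, and an analytics step that computes the average flow rate per day, the kind of trend a lab manager might track. The row format and the sample values are hypothetical.

```python
from collections import defaultdict
from datetime import date, datetime
from statistics import mean
from typing import Dict, Iterable, List, Tuple

Record = Tuple[datetime, float]


def clean_rows(rows: Iterable[Tuple[str, str]]) -> List[Record]:
    """Engineering step: parse (timestamp, flow) strings, skipping bad rows."""
    records = []
    for ts_text, flow_text in rows:
        try:
            records.append((datetime.fromisoformat(ts_text), float(flow_text)))
        except ValueError:
            continue  # a real pipeline would quarantine and log these rows
    return records


def daily_average_flow(records: Iterable[Record]) -> Dict[date, float]:
    """Analytics step: average flow rate per calendar day, in date order."""
    by_day = defaultdict(list)
    for ts, flow in records:
        by_day[ts.date()].append(flow)
    return {day: mean(flows) for day, flows in sorted(by_day.items())}


raw = [
    ("2024-05-01T09:00:00", "850.0"),
    ("2024-05-01T15:00:00", "830.0"),
    ("2024-05-02T09:00:00", "not-a-number"),  # dropped by clean_rows
    ("2024-05-02T10:00:00", "845.5"),
]
print(daily_average_flow(clean_rows(raw)))
# {datetime.date(2024, 5, 1): 840.0, datetime.date(2024, 5, 2): 845.5}
```

Keeping the two steps separate mirrors the split described in this section: the cleaning function can change (for example, to quarantine bad rows instead of dropping them) without touching the analysis built on top of it.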