AliExpress Wiki

Master Docker Build Python: The Ultimate Guide for Developers and DevOps Engineers

Master Docker Build Python with this comprehensive guide. Learn to create efficient, portable images using best practices, optimize build speed, choose the right base images, and deploy secure, scalable Python apps in production.

Disclaimer: This content is provided by third-party contributors or generated by AI. It does not necessarily reflect the views of AliExpress or the AliExpress blog team. Please refer to our full disclaimer.

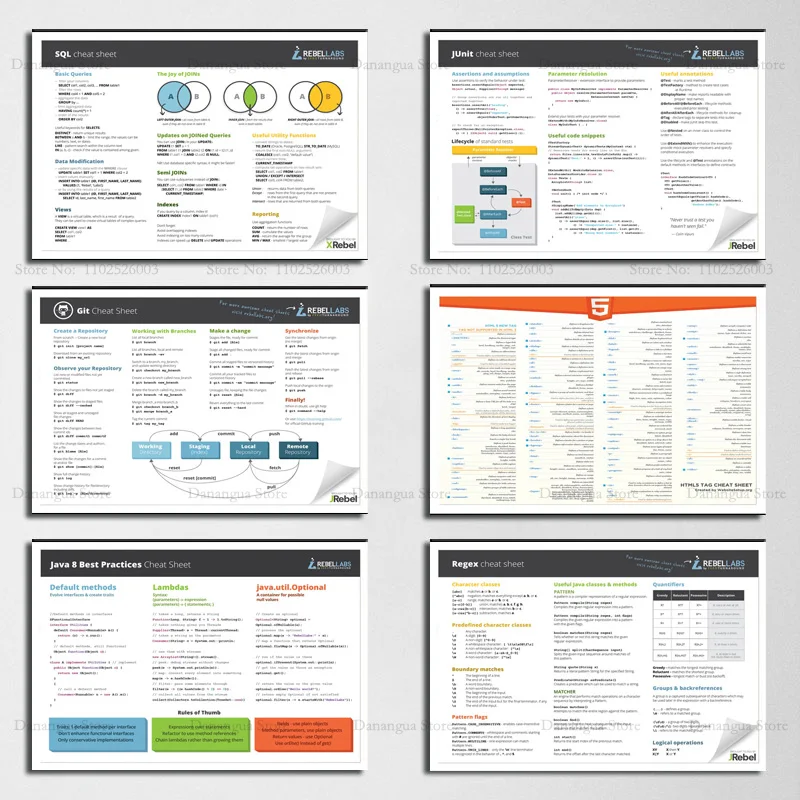

<h2> What Is Docker Build Python and Why Is It Essential for Modern Development? </h2>

Docker Build Python refers to the process of creating a Docker image tailored for running Python applications in a containerized environment. This technique has become a cornerstone of modern software development, especially for teams adopting DevOps practices, microservices architecture, and continuous integration/continuous deployment (CI/CD) pipelines. At its core, `docker build` is a command that builds an image from a Dockerfile; applied to Python, it lets developers package their code, dependencies, and runtime environment into a portable, reproducible container.

Why is this so important? Imagine writing a Python script that works perfectly on your local machine but fails on a colleague's system because of differing library versions or OS configurations. Docker addresses this inconsistency by encapsulating everything your application needs (the Python interpreter, pip packages, environment variables, and even system-level dependencies) in a single, isolated container. This ensures that your application runs the same way, whether it's on a developer's laptop, a staging server, or a production cloud instance.

The process begins with a Dockerfile, a text file that contains a series of instructions for building the image. For a Python application, this typically includes specifying a base image (like `python:3.11-slim`), copying your application code into the container, installing dependencies via `pip install -r requirements.txt`, and defining the command to run the app (e.g., `CMD ["python", "app.py"]`).

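The steps just described can be sketched as a minimal Dockerfile. This is only an illustration, assuming the project has a `requirements.txt` and an `app.py` entry point in its root directory; the `/app` working directory is an arbitrary choice, not something the text prescribes.

```Dockerfile
# Base image: the slim official Python image mentioned in the text
FROM python:3.11-slim

# Working directory inside the container (assumed path)
WORKDIR /app

# Copy the dependency list and install the packages it names
COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt

# Copy the rest of the application code
COPY . .

# Default command; app.py is the assumed entry point
CMD ["python", "app.py"]
```
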
Once the Dockerfile is ready, you run `docker build -t my-python-app .` to create the image; the trailing `.` tells Docker to use the current directory as the build context. This approach is not just about portability; it is also about scalability, security, and efficiency. Containers are lightweight compared to virtual machines, start up in seconds, and can be orchestrated at scale using tools like Kubernetes or Docker Compose. For Python developers working on web apps (e.g., Flask, Django), data science projects, or automation scripts, Docker Build Python provides a consistent foundation across development, testing, and deployment stages.

Moreover, the rise of cloud-native development has made Docker an indispensable tool. Platforms like AWS, Google Cloud, and Azure all support Docker containers, making it easier to deploy Python applications in the cloud. With Docker Build Python, you can automate the entire deployment pipeline, reducing human error and accelerating time-to-market.

Beyond technical benefits, the popularity of Docker Build Python is also fueled by the growing community and ecosystem. There are countless pre-built Python base images on Docker Hub, extensive documentation, and a wealth of tutorials and templates available. Whether you're a beginner learning containerization or an experienced DevOps engineer managing complex pipelines, mastering Docker Build Python is a critical skill in today's software landscape.

<h2> How to Choose the Right Docker Base Image for Python Applications? </h2>

Selecting the appropriate base image for your Python application is a crucial step in the Docker Build Python process. The base image determines the operating system, Python version, and pre-installed tools that will be available in your container. Choosing the wrong one can lead to compatibility issues, bloated images, or security vulnerabilities. So, how do you make the right choice?

First, consider the Python version you're using. If your application relies on features introduced in Python 3.11 or later, you must use a base image that provides that version.
For example, `python:3.11-slim` is a lightweight option that includes the interpreter, `pip`, and only the essential system packages, while the full `python:3.11` image adds a much larger set of Debian packages, such as compilers and common development headers. If you're working with legacy code, you might need to stick with older versions like `python:3.8` or `python:3.9`, bearing in mind that versions past their end of life no longer receive security fixes.

Next, evaluate the size of the base image. Smaller images mean faster downloads, quicker startup times, and a reduced attack surface. The slim variants (e.g., `python:3.11-slim`) are ideal for production environments where performance and security are priorities. On the other hand, if you're in a development environment and need debugging tools or additional libraries, you might opt for the full `python:3.11` image.

Another important factor is the underlying OS. The default base images are based on Debian, which is stable and widely supported. However, if you need a smaller footprint, consider using Alpine Linux-based images like `python:3.11-alpine`. These are significantly smaller but come with trade-offs: Alpine uses musl instead of glibc, which can cause compatibility issues with some Python packages (especially those with C extensions), and packages may have to be compiled from source when no musl-compatible wheel is available. Always test your application thoroughly when using Alpine.

Security is also a major concern. Base images should be updated regularly to patch vulnerabilities. Use trusted sources like the Docker Official Images on Docker Hub, which are maintained by the Docker community. Avoid third-party or unverified images unless you've audited them thoroughly.

Additionally, think about your application's dependencies. If you're using packages like `numpy`, `pandas`, or `scikit-learn`, ensure the base image supports the required system libraries. Some images, such as the full `python:3.11`, come with compilers and development headers pre-installed, which can simplify the installation of C-based Python packages; on slim images you may need to install packages like `build-essential` yourself.

Finally, consider using multi-stage builds to further optimize your Dockerfile.
This technique allows you to use a larger image during the build phase (e.g., with compilers and build tools) and then copy only the necessary artifacts into a minimal runtime image. This results in smaller, more secure final images.

In summary, choosing the right base image involves balancing size, performance, compatibility, and security. For most use cases, `python:3.11-slim` strikes a good balance. But always tailor your choice to your specific project needs, and test rigorously before deploying to production.

<h2> How to Optimize Docker Build Python for Faster and Smaller Images? </h2>

Optimizing your Docker Build Python process is essential for improving performance, reducing deployment time, and minimizing resource usage. A poorly optimized Dockerfile can result in large images, slow builds, and an increased attack surface. Here are several proven strategies to make your Docker builds faster and more efficient.

First, leverage multi-stage builds. This technique separates the build process from the runtime environment. In the first stage, you use a full-featured image (like `python:3.11`) to install dependencies, compile code, and run tests. In the second stage, you copy only the necessary files (e.g., installed Python packages, static assets) into a minimal runtime image (like `python:3.11-slim`). This can drastically reduce the final image size. For example, a Django app might be built with `python:3.11` but deployed with `python:3.11-slim`, which can cut the image size by 70% or more, depending on the dependencies.

Second, optimize your Dockerfile structure. Docker uses a layer caching system, so the order of instructions matters. Place the instructions that change least frequently at the top. For instance, copy `requirements.txt` and install dependencies before copying your application code. This way, if only your code changes, Docker can reuse the cached dependency layer and skip re-installing the packages. Always use `.dockerignore` to exclude unnecessary files (like `.git`, `__pycache__`, `tests`) from the build context so they are not copied into the image.

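The first two strategies above can be combined in one Dockerfile. The following is a hedged sketch, not a definitive layout: the stage name `builder`, the `/opt/venv` virtual environment path, the `/app` directory, and the `app.py` entry point are all assumptions made for illustration.

```Dockerfile
# ---- Build stage: full image, compilers available for C extensions ----
FROM python:3.11 AS builder

WORKDIR /app

# Install into a virtual environment so the result is a single directory
# that can be copied to the runtime stage (path is an assumption)
RUN python -m venv /opt/venv
ENV PATH="/opt/venv/bin:$PATH"

# Copy only the dependency list first: this layer and the install below
# stay cached until requirements.txt itself changes
COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt

# ---- Runtime stage: slim image without build tools ----
FROM python:3.11-slim

WORKDIR /app

# Bring over the installed packages from the build stage
COPY --from=builder /opt/venv /opt/venv
ENV PATH="/opt/venv/bin:$PATH"

# Application code changes most often, so it is copied last
COPY . .

CMD ["python", "app.py"]
```

One caveat with this layout: a package whose compiled extension links against a shared system library that exists in the full image but not in the slim one will still need that library installed in the runtime stage.
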
Third, use minimal base images. As mentioned earlier, `python:3.11-slim` or `python:3.11-alpine` are excellent choices. Alpine is especially useful for reducing image size, but be cautious with C extensions. If you must use Alpine, check that musl-compatible builds of your packages are available. Regardless of the base image, pass `--no-cache-dir` to `pip install` so that pip's download cache is not stored in the image.

Fourth, minimize the number of layers. Each `RUN`, `COPY`, or `ADD` instruction creates a new layer. Combine multiple commands using `&&` and line continuations (`\`) to reduce the layer count. For example:

```Dockerfile
RUN apt-get update \
    && apt-get install -y curl \
    && rm -rf /var/lib/apt/lists/*
```

Fifth, use build arguments to make your Dockerfile more flexible. For example, you can define `PYTHON_VERSION` as a build argument so you can easily switch versions without modifying the Dockerfile.

Sixth, enable BuildKit for faster builds. Docker BuildKit is a modern build system that supports parallel builds, better caching, and more efficient layer handling. On older Docker versions, enable it by setting `DOCKER_BUILDKIT=1` in your environment; Docker Engine 23.0 and later use BuildKit by default.

Lastly, scan your images for vulnerabilities using tools like Trivy or Clair. This ensures your final image is not only small and fast but also secure.

By applying these optimization techniques, you can cut rebuild times from minutes to seconds when only application code changes, often shrink images by hundreds of MB, and improve overall deployment reliability.

<h2> What Are the Best Practices for Docker Build Python in Production Environments? </h2>

Running Python applications in production with Docker requires more than just a working `docker build` command: it demands a disciplined approach to security, scalability, and observability. Here are the best practices every DevOps engineer and developer should follow when deploying Python apps using Docker.

First, always use non-root users. Running containers as root is a major security risk. In your Dockerfile, create a dedicated user (e.g., `appuser`) and switch to it using `USER appuser`. This limits the damage if the container is compromised.

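As a rough illustration of the non-root setup, the Dockerfile below creates the `appuser` account named in the text on a Debian-based image and switches to it before the application starts. The `/app` directory and `app.py` entry point are assumptions; adjust them to your project.

```Dockerfile
FROM python:3.11-slim

# Create an unprivileged system group and user (Debian-based images)
RUN groupadd --system appuser \
    && useradd --system --gid appuser --no-create-home appuser

WORKDIR /app

# Dependencies are installed as root so they land in the system site-packages
COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt

# Copy the code and hand ownership to the unprivileged user
COPY --chown=appuser:appuser . .

# Every later instruction, and the running container, uses appuser
USER appuser

CMD ["python", "app.py"]
```

Placing `USER appuser` after the install steps keeps the build simple, since package installation still runs with root privileges while the application itself does not.
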
Second, avoid hardcoding secrets. Never include API keys, passwords, or tokens in your Dockerfile or image; this includes `ENV` instructions, whose values are stored in the image. Instead, pass secrets as environment variables at runtime with `-e` or `--env-file` during `docker run`. For production, integrate with secret management tools like HashiCorp Vault or AWS Secrets Manager.

Third, use health checks. Define a `HEALTHCHECK` instruction in your Dockerfile to monitor your application's status. For example, `HEALTHCHECK CMD curl -f http://localhost:8000/ || exit 1` marks the container as unhealthy when the app stops responding, so that an orchestrator can restart or replace it. Note that `curl` must be installed in the image for this check to work; the slim images do not include it.

Fourth, implement proper logging. Your application should write logs to `stdout` and `stderr`, which Docker captures and can forward to logging systems like Fluentd, ELK, or cloud-native solutions (e.g., AWS CloudWatch). Avoid writing logs to files inside the container unless absolutely necessary.

Fifth, use `.dockerignore` effectively. Exclude development files, test directories, and sensitive data to reduce image size and prevent accidental exposure.

Sixth, regularly update base images and dependencies. Use tools like Dependabot or Renovate to monitor and update your `requirements.txt` and base images automatically. This helps patch known vulnerabilities.

Seventh, test your Docker images before deployment. Use `docker run` with `--rm` to test locally, and consider running integration tests inside the container.

Eighth, use Docker Compose or Kubernetes for orchestrating multiple services. This is especially important for web apps with databases, message queues, or caching layers.

Finally, monitor and log container performance. Use tools like Prometheus, Grafana, or Datadog to track CPU, memory, and network usage.

Following these best practices helps keep your Docker Build Python workflow secure, reliable, and production-ready.

<h2> How Does Docker Build Python Compare to Other Deployment Methods for Python Apps? </h2>

When comparing Docker Build Python to other deployment methods (such as virtual environments, bare-metal servers, or cloud functions), several key differences emerge in terms of portability, scalability, and maintenance.

Virtual environments (e.g., `venv`, `conda`) are lightweight and great for local development, but they don't solve the "it works on my machine" problem. They don't package the OS or system dependencies, so deployment across different environments remains fragile.

Bare-metal servers offer full control but require significant manual setup, configuration management (e.g., Ansible, Puppet), and ongoing maintenance. Scaling is slow and error-prone.

Cloud functions (e.g., AWS Lambda, Google Cloud Functions) are serverless and cost-effective for small, stateless tasks, but they have strict size limits, cold start delays, and limited support for long-running processes, making them a poor fit for many full Python web apps.

In contrast, Docker Build Python offers the best of both worlds: portability across environments, consistent behavior, fast scaling, and easy orchestration. It's ideal for microservices, CI/CD pipelines, and cloud-native applications. While Docker adds a small overhead, the benefits in consistency, security, and developer productivity usually outweigh the costs. For most Python applications today, Docker Build Python is a strong default choice.