AliExpress Wiki

How to Read Files in Parallel Using Python: A Comprehensive Guide

How to read files in parallel using Python. Improve performance with multiprocessing, threading, and concurrent.futures. Learn efficient techniques for handling large datasets and multiple files.

Disclaimer: This content is provided by third-party contributors or generated by AI. It does not necessarily reflect the views of AliExpress or the AliExpress blog team. Please refer to our full disclaimer.

People also searched

Related Searches

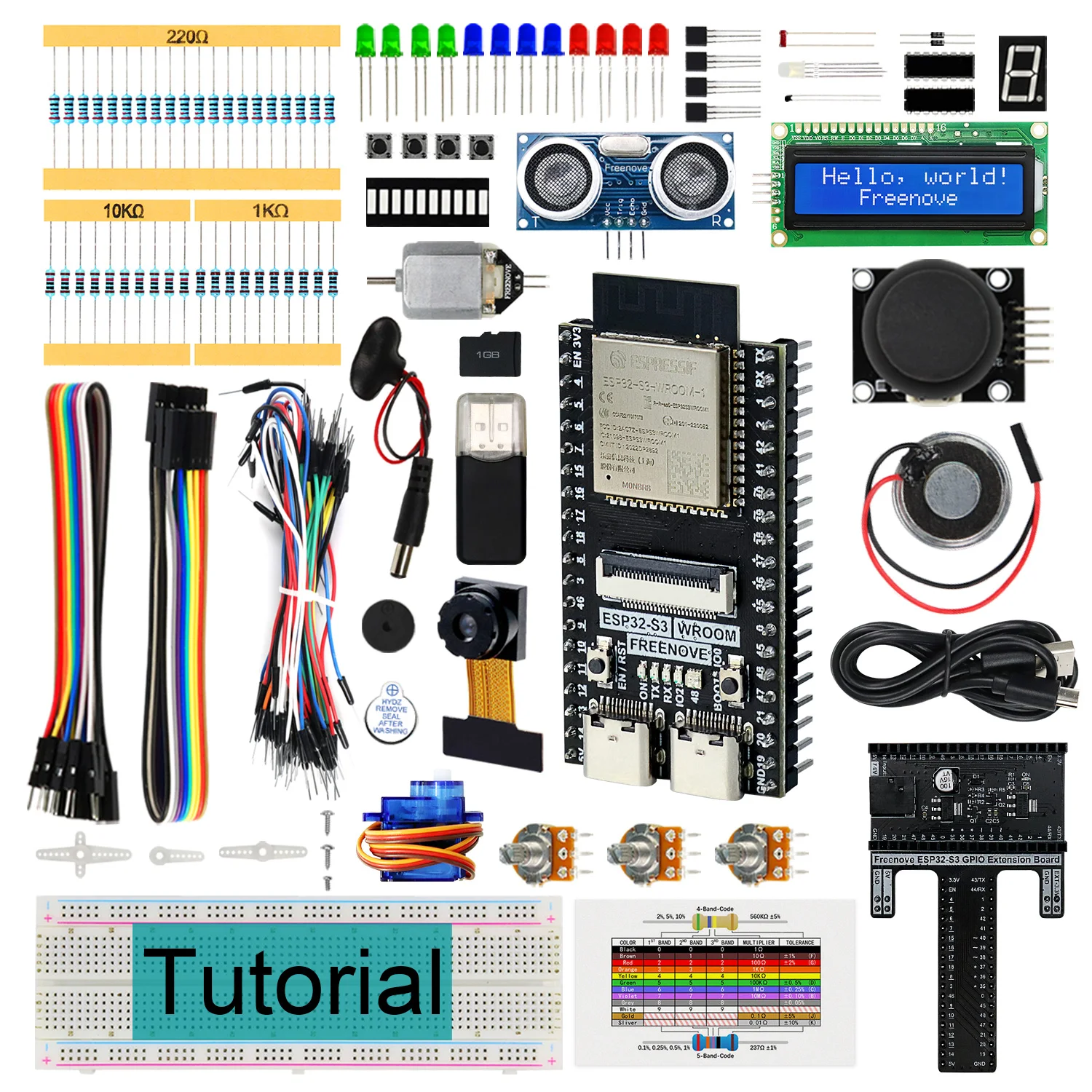

Python is a versatile programming language widely used for data processing, automation, and system scripting. When you work with large datasets or many files, reading and processing them one at a time can become a bottleneck. Python offers several ways to read files in parallel, which can improve throughput considerably. This article explains what parallel file reading means in Python, how to implement it, and why it matters for modern data processing tasks.

<h2> What Is Parallel File Reading in Python? </h2>

Reading files in parallel means reading multiple files concurrently rather than one after another. The technique is particularly useful when you have a large volume of data or many files to process quickly. By using Python's multiprocessing or threading capabilities, you can distribute file-reading tasks across multiple processes or threads and reduce overall execution time.

Parallel file reading is not limited to loading a file's contents. It can also cover parsing, filtering, and transforming the data as it is read. This is especially beneficial in applications such as log analysis, data aggregation, and batch processing, where speed and efficiency are critical.

Python's standard library includes several modules that support parallel processing: `multiprocessing`, `concurrent.futures`, and `threading`. Each has its own use cases and performance characteristics.
For example, the `multiprocessing` module is well suited to CPU-bound tasks, while `threading` is a better fit for I/O-bound tasks such as reading files from disk or over a network, because threads spend most of their time waiting on I/O rather than competing for the CPU.

When implementing parallel file reading, consider the file sizes, the number of files, and the system resources available. Reading too many files at once can lead to resource contention and may even make the system unresponsive, so you need to balance the number of parallel tasks against what the system can handle.

Beyond the built-in modules, third-party libraries such as Dask and Joblib provide higher-level abstractions for parallel computing. They can simplify reading and processing large datasets in parallel and make it easier to write efficient, scalable code.

<h2> How to Read Files in Parallel Using Python? </h2>

To read files in parallel, you can use the `concurrent.futures` module, which provides a high-level interface for asynchronously executing callables. It offers two main classes: `ThreadPoolExecutor` and `ProcessPoolExecutor`. `ThreadPoolExecutor` is suitable for I/O-bound tasks, while `ProcessPoolExecutor` is better for CPU-bound tasks.
Here's a simple example of how to use `ThreadPoolExecutor` to read multiple files in parallel:

```python
import concurrent.futures


def read_file(file_path):
    # The context manager closes the file even if reading fails.
    with open(file_path, 'r') as file:
        return file.read()


file_paths = ['file1.txt', 'file2.txt', 'file3.txt']

# Each call to read_file runs on a worker thread from the pool.
with concurrent.futures.ThreadPoolExecutor() as executor:
    results = list(executor.map(read_file, file_paths))

for result in results:
    print(result)
```

In this example, `read_file` is called once for each path in `file_paths`. The `ThreadPoolExecutor` manages the threads and runs the calls concurrently. The `executor.map` method returns an iterator that yields the results in the same order as the input paths, regardless of which read finishes first.

If you're dealing with large files or CPU-intensive operations, you might want to use `ProcessPoolExecutor` instead. This executor runs tasks in a pool of separate worker processes, which lets you take advantage of multiple CPU cores. Here's an example using `ProcessPoolExecutor`:

```python
import concurrent.futures


def read_file(file_path):
    with open(file_path, 'r') as file:
        return file.read()


# The guard is required on platforms that start workers by spawning
# a fresh interpreter (for example Windows and macOS).
if __name__ == '__main__':
    file_paths = ['file1.txt', 'file2.txt', 'file3.txt']

    with concurrent.futures.ProcessPoolExecutor() as executor:
        results = list(executor.map(read_file, file_paths))

    for result in results:
        print(result)
```

In this case, the `ProcessPoolExecutor` distributes the file paths across its worker processes, which can be more efficient for CPU-bound tasks. Note that each result has to be sent back from the worker process to the parent, and that multiple processes increase memory usage, so monitor system resources when working with large datasets.

Another approach is to use the `multiprocessing` module directly. It provides more control over parallel execution and lets you define custom worker functions.
Here's an example using `multiprocessing.Pool`:

```python
import multiprocessing


def read_file(file_path):
    with open(file_path, 'r') as file:
        return file.read()


# Guard the entry point so worker processes can import this module safely.
if __name__ == '__main__':
    file_paths = ['file1.txt', 'file2.txt', 'file3.txt']

    with multiprocessing.Pool() as pool:
        results = pool.map(read_file, file_paths)

    for result in results:
        print(result)
```

In this example, `multiprocessing.Pool` creates a pool of worker processes that run `read_file` in parallel. The `pool.map` method distributes the file paths to the workers and collects the results in input order.

Whichever method you choose, handle the exceptions that can occur during parallel execution. A try-except block inside the worker function lets you catch a missing or unreadable file without aborting the whole batch. Also make sure files are closed after reading, for example with a `with` statement, to avoid resource leaks.

<h2> Why is Parallel File Reading Important in Python? </h2>

Parallel file reading matters because it lets you process large volumes of data more efficiently. In many real-world applications, such as log analysis, data aggregation, and batch processing, reading and processing multiple files at the same time can significantly reduce the time needed to complete a task.

One of the main advantages is that it can make use of modern multi-core processors. When the work done on each file is CPU-heavy, such as parsing or transforming its contents, distributing the tasks across multiple processes spreads that work over several cores and reduces overall execution time. This is especially beneficial when dealing with large datasets or when you need to process many files quickly.
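Whether parallel reading actually saves time depends on the storage device, the file sizes, and how much work is done per file, so it is worth measuring on your own data. The following is a minimal sketch rather than a rigorous benchmark. The helper names (`make_sample_files`, `time_sequential`, `time_threaded`), the sample file names, and the worker count of 4 are all illustrative assumptions.

```python
import concurrent.futures
import tempfile
import time
from pathlib import Path


def read_file(file_path):
    """Return the full text of one file."""
    with open(file_path, "r", encoding="utf-8") as file:
        return file.read()


def time_sequential(file_paths):
    """Read files one after another; return (results, elapsed seconds)."""
    start = time.perf_counter()
    results = [read_file(path) for path in file_paths]
    return results, time.perf_counter() - start


def time_threaded(file_paths, max_workers=4):
    """Read files with a thread pool; return (results, elapsed seconds)."""
    start = time.perf_counter()
    with concurrent.futures.ThreadPoolExecutor(max_workers=max_workers) as executor:
        results = list(executor.map(read_file, file_paths))
    return results, time.perf_counter() - start


def make_sample_files(directory, count=8, lines=1000):
    """Create small hypothetical sample files so the sketch is self-contained."""
    paths = []
    for index in range(count):
        path = Path(directory) / f"sample_{index}.txt"
        path.write_text(f"line from file {index}\n" * lines, encoding="utf-8")
        paths.append(path)
    return paths


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp_dir:
        paths = make_sample_files(tmp_dir)
        seq_results, seq_time = time_sequential(paths)
        par_results, par_time = time_threaded(paths)
        # Both approaches must return identical contents in the same order.
        print("same results:", seq_results == par_results)
        print(f"sequential: {seq_time:.4f}s, threaded: {par_time:.4f}s")
```

With small files that are already in the operating system's cache, the threaded version may be no faster, or even slower, because of thread start-up overhead. Larger files or network storage are where a difference is more likely to show.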

Another advantage is that parallel reads can overlap I/O waits. When files are read sequentially, the program is often I/O-bound: the disk or network, not the CPU, is the limiting factor, and the program sits idle while each read completes. Issuing several reads at once keeps more requests in flight and can reduce the total time spent waiting for data from disk or over a network.

Parallel file reading can also improve the scalability of your application. As the number of files or the size of the data grows, the ability to process files in parallel becomes more important. An application designed for parallel processing can handle larger datasets and more complex tasks with a smaller increase in execution time.

Parallel file reading is not the best solution for every problem, however. In some cases, the overhead of creating and managing threads or processes outweighs the benefit of parallel execution. Evaluate the specific requirements of your application and choose the approach that fits your use case.

<h2> What are the Best Practices for Reading Files in Parallel in Python? </h2>

When reading files in parallel, follow a few best practices to keep your code efficient, reliable, and maintainable. One of the most important is to limit the number of parallel tasks so that you do not overwhelm the system's resources.
While it may be tempting to create as many threads or processes as possible, doing so can lead to resource contention and may even make the system unresponsive. A good rule of thumb is to use the number of CPU cores as a guide. For example, on a system with four CPU cores, you might limit the number of parallel tasks to four or five. This helps the system handle the workload without becoming overloaded.

Another best practice is to use context managers for file operations. A context manager ensures that a file is closed even if an exception occurs while it is being read, which prevents resource leaks and makes your code more robust.

It's also important to handle the exceptions that may occur during parallel execution. A try-except block inside the worker function lets you catch and report errors gracefully instead of losing the whole batch.

When working with large datasets, use memory-efficient data structures and algorithms. Instead of reading an entire file into memory, you can process the data in chunks or use generators to yield the data as it is read. This reduces memory usage and helps your application handle large datasets without running out of memory.

Finally, test your code thoroughly. Try it with different file sizes and different numbers of files so that you know it handles a variety of scenarios, and monitor performance and resource usage to identify bottlenecks.

<h2> How to Choose the Right Python Kit for Parallel File Reading?
</h2>

When choosing a Python kit for parallel file reading, consider the specific requirements of your project and the capabilities of the kit. One popular kit for Python development is the Freenove Super Starter Kit for ESP32-S3-WROOM CAM. It is designed for wireless applications and includes a comprehensive set of components and resources for Python and C programming.

The kit includes a detailed 539-page tutorial that covers a wide range of topics, including Python programming, C code development, and hardware integration. With 176 items and 76 projects, it offers a starting point for developers who want to explore parallel file reading and other Python applications.

One of the key advantages of the Freenove Super Starter Kit is its support for both Python and C programming, which makes it a versatile choice for developers who want to work with both high-level and low-level languages. The kit also includes a wide range of components, such as sensors, actuators, and communication modules, for building more complex applications.

In addition to the hardware, the kit comes with software tools and libraries that make it easier to develop and test applications and provide a foundation for more advanced projects. The detailed tutorial is also a valuable resource for developers who are new to Python or parallel programming.

When choosing a Python kit for parallel file reading, also consider the level of support and documentation provided by the manufacturer.
The Freenove Super Starter Kit is known for its documentation and community support, which makes it a good choice for developers of all skill levels. Whether you're a beginner or an experienced developer, it provides tools and resources to help you work on parallel file reading in Python.
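
To tie together the best practices discussed earlier in this article, the sketch below combines a bounded worker count, context managers, error handling inside the worker, and chunked reading through a generator. It is one possible arrangement under stated assumptions, not a definitive implementation. The function names (`iter_chunks`, `count_lines`, `count_lines_in_parallel`), the 64 KiB chunk size, and the line-counting task are hypothetical choices made for illustration.

```python
import concurrent.futures
import os


def iter_chunks(file_path, chunk_size=64 * 1024):
    """Yield a file's text in fixed-size chunks instead of loading it whole."""
    with open(file_path, "r", encoding="utf-8") as file:
        while True:
            chunk = file.read(chunk_size)
            if not chunk:
                break
            yield chunk


def count_lines(file_path):
    """Worker: count newline characters, returning errors instead of raising."""
    try:
        total = sum(chunk.count("\n") for chunk in iter_chunks(file_path))
        return file_path, total, None
    except (OSError, UnicodeDecodeError) as error:
        # One bad file should not abort the rest of the batch.
        return file_path, None, error


def count_lines_in_parallel(file_paths, max_workers=None):
    """Run count_lines over many files with a bounded thread pool."""
    if max_workers is None:
        # Assumption: cap the pool at the CPU count, following the
        # rule of thumb from the best-practices section.
        max_workers = os.cpu_count() or 1
    with concurrent.futures.ThreadPoolExecutor(max_workers=max_workers) as executor:
        return list(executor.map(count_lines, file_paths))


if __name__ == "__main__":
    paths = ["file1.txt", "file2.txt", "file3.txt"]
    for path, lines, error in count_lines_in_parallel(paths):
        if error is None:
            print(f"{path}: {lines} lines")
        else:
            print(f"{path}: failed ({error})")
```

Returning the error alongside the path keeps every result in the same shape, so the caller can decide whether to retry, log, or skip a failed file. For CPU-heavy per-file work, the same worker could be submitted to a `ProcessPoolExecutor` instead.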